Everyone’s building faster. Now what?

Why it’s time for product managers to shift left.

The bottleneck is moving

In February 2026, a solo developer named Pieter Levels posted a thread on x.com describing what happened when he ran an AI coding assistant across his eight live products with full autonomy. In one week, he cleared his entire backlog of features and bug fixes, output that would normally take a small engineering team. He’d been known for shipping fast. This was different. For the first time, he said, he was shipping faster than new work could accumulate (“…10x my normal output”).

His thread went viral because developers everywhere recognised the same shift in their own work. AI-assisted development isn’t producing an incremental improvement in execution speed. It’s producing a step-change. And that step-change is available to everyone. Your team will get faster. So will every other team in your market. The acceleration is most dramatic in software today, but the upstream shift it creates — toward strategy and judgement as the constraint — applies wherever AI is changing how teams work.

When everyone can build fast, building fast stops being a competitive advantage. It becomes table stakes. And the bottleneck moves somewhere else.

Levels noticed this himself. Once execution was no longer the constraint, his new bottlenecks were cognitive: what to build, how to prioritise across eight products, how to hold the full strategic picture in his head. The constraint had shifted upstream — from the ability to ship to the quality of the decisions that determine what gets shipped.

For product teams, this shift matters enormously. If AI compresses execution, the differentiating work becomes strategy, customer insight, prioritisation, and judgement. Deciding what to build and why becomes the most valuable work you do. And the quality of that work depends entirely on the quality of the information it’s based on.

What humans know that AI can’t see

Every product team carries an enormous amount of implicit knowledge that never makes it into documents. Your strategy document hasn’t been updated since last quarter, but the team was in the all-hands where the CEO shifted priorities. Everyone knows the written strategy is stale. The OKRs don’t quite connect to the roadmap, but the PM and engineering lead had a conversation and they both understand the real priorities. There’s an initiative on the roadmap with no clear strategic rationale, but everyone knows it’s there because of a key client commitment.

Humans compensate for messy information constantly. They fill in gaps from memory, from corridor conversations, from shared context built over months of working together. They read between the lines of a vague strategy document because they were in the room when the intent was discussed. This compensation is so automatic that most teams don’t even realise how much of their decision-making depends on knowledge that exists only in people’s heads.

AI can’t do any of this.

This matters now because product teams are increasingly using AI not just for writing and analysis, but as a reasoning partner for strategic decisions. Teams load their strategy documents, OKRs, roadmaps, and customer research into AI tools and use them to pressure-test priorities, identify gaps, and challenge assumptions. It’s a powerful practice — but it means AI is now reasoning against your strategic information. And it can only work with what it’s given.

AI reads what’s written. If the strategy document says one thing but the team decided something different in a meeting last week, AI doesn’t know about the meeting. If customer evidence exists in someone’s head but hasn’t been written into the research synthesis, it’s invisible. If two documents contradict each other, AI doesn’t know which one the team actually follows.

Every gap in your documented information becomes a structural blind spot for AI. Not a minor inconvenience — a genuine hole in its ability to reason about your product, your priorities, and your decisions. And the more you rely on AI for strategic reasoning and decision support, the more those blind spots shape your outcomes.

From nice-to-have to structurally necessary

Here’s where these two observations converge. Execution speed is being commoditised, which makes upstream decisions the differentiator. Those upstream decisions depend on your information layer — your strategy, evidence, priorities, constraints. That information layer has always been messier than anyone acknowledges. And AI, which is increasingly part of how teams reason about these decisions, cannot compensate for the mess the way humans can.

This isn’t just an AI problem. A team with coherent, current, well-connected strategic information makes better decisions regardless of tooling. That’s always been true. Clear documentation of strategy, evidence, and priorities helps any team — it reduces miscommunication, speeds up onboarding, makes alignment discussions productive rather than circular. Good information hygiene is good practice, full stop. And coherence alone isn’t enough — the information also needs to be grounded in reality. Perfectly coherent documents that describe a strategy built on untested assumptions are still a problem. The goal is information that is coherent, current, and genuinely evidence-based.

But AI changes the stakes. In a world where your competitors are applying AI to well-maintained strategic information and sharp decision-making, gaps in your own information layer become gaps in your competitive position. AI applied to coherent data and clear strategy will outperform AI applied to stale documents and contradictory priorities. Every time. No amount of AI sophistication will compensate for holes in what it can see.

Information coherence has moved from a nice-to-have to a structural requirement. Not because AI demands it, but because the competitive landscape now rewards it in a way it didn’t before.

A metric that’s been missing

Organisations treat code quality as a first-class metric. They have linters, test coverage targets, review processes. They treat system uptime as a first-class metric — dashboards, alerting, SLAs. They treat customer satisfaction as a first-class metric — NPS, CSAT, regular measurement cadences.

Nobody treats the coherence of their strategic information as a metric. It’s not measured. It’s not tracked. There’s no dashboard, no threshold that triggers a review. It’s just assumed to be “fine” or acknowledged to be “a bit messy” with a shrug. In a world where this information increasingly determines competitive outcomes, that’s a remarkable blind spot.

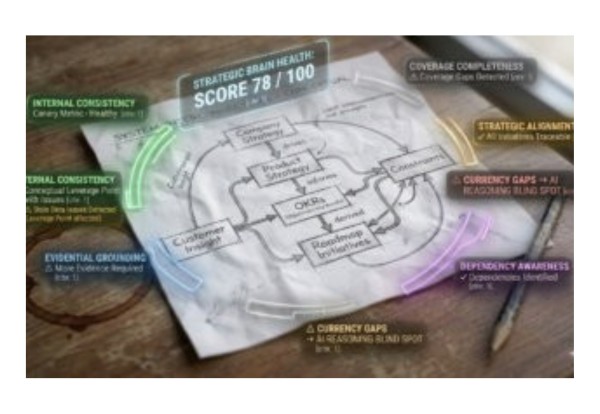

We’ve been exploring what we call an organisational brain health score, a structured way to assess how well a team’s strategic information hangs together. We’re sharing the framework here because we think it’s an interesting concept worth exploring. It measures six dimensions:

- Strategic Alignment: can each initiative be traced to a strategic objective, or are there orphaned projects with no clear rationale?

- Evidential Grounding: are priorities supported by actual customer evidence, or built on untested assumptions?

- Internal Consistency: do your documents agree with each other, or does the strategy say one thing while the OKRs pursue another?

- Currency and Freshness: do artefacts reflect current reality, or are they describing last quarter’s world?

- Coverage Completeness: are all strategic areas addressed, or are there critical gaps?

- Dependency Awareness: are cross-team dependencies and risks identified, or will surprises emerge at the worst moment?

Product leaders will recognise these items. Most will also recognise that they’ve never assessed them systematically, relying instead on instinct or the occasional discovery that something important was out of date. The framework works whether you use AI to perform the assessment or simply use the six dimensions as a structured agenda for a leadership review. Either way, it replaces vague unease with a specific diagnosis.

Treating information coherence as a first-class metric means measuring it regularly, tracking it over time, having thresholds that trigger review, and making it visible to the team as a shared health indicator. Not surveillance — shared awareness. When the score is healthy, your team’s decisions are built on solid ground. When it drops, you know where to look before the consequences arrive downstream.
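For teams that want to track this in a lightweight way, the idea can be sketched in a few lines of Python. This is an illustration only: the six dimension names come from the framework above, but the 0 to 10 scale, the review threshold of 6.0, the unweighted average, and the names `Assessment`, `needs_review`, and `declines` are all assumptions made for the example, not part of the framework itself.

```python
from dataclasses import dataclass

# Dimension names are taken from the framework; everything else is assumed.
DIMENSIONS = (
    "Strategic Alignment",
    "Evidential Grounding",
    "Internal Consistency",
    "Currency and Freshness",
    "Coverage Completeness",
    "Dependency Awareness",
)

REVIEW_THRESHOLD = 6.0  # hypothetical: a score below this triggers a review


@dataclass
class Assessment:
    period: str
    scores: dict[str, float]  # dimension name -> score on an assumed 0-10 scale

    def __post_init__(self) -> None:
        # Refuse partial assessments so trends stay comparable over time.
        missing = [d for d in DIMENSIONS if d not in self.scores]
        if missing:
            raise ValueError(f"Missing scores for: {missing}")

    def overall(self) -> float:
        """Unweighted mean across the six dimensions (an assumed aggregation)."""
        return sum(self.scores[d] for d in DIMENSIONS) / len(DIMENSIONS)

    def needs_review(self, threshold: float = REVIEW_THRESHOLD) -> list[str]:
        """Dimensions scoring below the threshold, in framework order."""
        return [d for d in DIMENSIONS if self.scores[d] < threshold]


def declines(previous: Assessment, current: Assessment) -> dict[str, float]:
    """Dimensions whose score fell between two assessments, with the change."""
    return {
        d: current.scores[d] - previous.scores[d]
        for d in DIMENSIONS
        if current.scores[d] < previous.scores[d]
    }


if __name__ == "__main__":
    # Made-up scores for two review cycles.
    q1 = Assessment("Q1", {d: 8.0 for d in DIMENSIONS})
    q2 = Assessment(
        "Q2",
        {**q1.scores, "Internal Consistency": 5.0, "Currency and Freshness": 5.5},
    )
    print("Needs review:", q2.needs_review())
    print("Declined since Q1:", declines(q1, q2))
```

The scores themselves would still come from a leadership review or an AI-assisted assessment; the script only makes the trend and the review triggers visible.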

Early evidence

We tested this framework in a controlled experiment. We created a complete set of canonical strategic documents — the agreed, authoritative versions of strategy, OKRs, roadmap, customer research, and constraints — for a fictional company, then produced five variants, each with a specific type of coherence failure deliberately introduced. An AI assessor (Claude, operating under a structured methodology with no prior knowledge of what had been changed) independently scored each document set across all six dimensions.

To be transparent: this was a simulated environment with synthetic documents and an AI assessor, not a live team audit by human evaluators. It’s early-stage validation, not proof at scale. But the results were clear: every planted coherence failure was detected, and each produced a distinct diagnostic profile that identified the type of problem and where it lived. Two findings stood out as most actionable:

Internal Consistency is the canary. It was the most volatile dimension — the first to degrade in nearly every variant. If you assess only one thing, assess whether your documents agree with each other.

Currency and Freshness is the highest-leverage intervention. When documents go stale, internal consistency degrades with them. A synchronised update cadence — reviewing and updating canonical documents together, on a regular cycle — prevents most other coherence problems from developing.
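A synchronised cadence is easy to check mechanically if you record when each canonical document was last reviewed. The sketch below is a minimal example under stated assumptions: the 90-day limit, the document names, and the function names `stale_documents` and `review_spread_days` are hypothetical and were not part of the experiment described above.

```python
from datetime import date


def stale_documents(
    last_reviewed: dict[str, date], today: date, max_age_days: int = 90
) -> list[str]:
    """Names of documents last reviewed more than max_age_days ago."""
    return sorted(
        name
        for name, reviewed in last_reviewed.items()
        if (today - reviewed).days > max_age_days
    )


def review_spread_days(last_reviewed: dict[str, date]) -> int:
    """Days between the oldest and newest review dates.

    A large spread suggests the documents are not being updated together.
    """
    if not last_reviewed:
        return 0
    dates = list(last_reviewed.values())
    return (max(dates) - min(dates)).days


if __name__ == "__main__":
    # Made-up review dates for three canonical documents.
    reviews = {
        "product strategy": date(2025, 10, 1),
        "OKRs": date(2026, 1, 10),
        "roadmap": date(2026, 1, 12),
    }
    today = date(2026, 2, 1)
    print("Stale:", stale_documents(reviews, today))
    print("Spread in days:", review_spread_days(reviews))
```

In this example the strategy document is both stale and out of step with the other two, which is the pattern most likely to produce contradictions between documents.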

Where to start

There’s a practical step you can take this week that costs nothing and teaches you a great deal. Gather your team’s core strategic documents into one place: company strategy, product strategy, OKRs, current roadmap, customer research synthesis, and known constraints. They may already exist. They may need updating. That’s fine — the goal isn’t perfect documentation. Product management is messy, political, and full of trade-offs, and it always will be. The goal is knowing where your biggest coherence gaps are and which ones are actively undermining your decisions. The act of curating what’s canonical — deciding which documents are your team’s authoritative source of truth — is itself enormously valuable. Most teams have never forced the question: is our strategy document still current? Do we actually agree on these priorities?

Then use an AI assistant with this context for a real prioritisation discussion (be sure to use a company-approved AI tool to protect your confidential data). Ask it to trace which initiatives connect to which strategic objectives. Ask it to find contradictions. Ask it where customer evidence is missing. What you’ll discover is that your documents probably disagree with each other more than you realised. That’s your coherence problem becoming visible, and once it is visible it becomes specific, concrete, and fixable.
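The tracing question can also be checked by hand if you write down which objectives each initiative claims to serve. Here is a minimal sketch, assuming you have recorded those links yourself; the function name `trace_initiatives` and all of the sample objectives and initiatives are hypothetical.

```python
def trace_initiatives(
    initiative_links: dict[str, list[str]], objectives: set[str]
) -> tuple[list[str], list[str]]:
    """Return (orphaned initiatives, objectives with no initiative).

    An initiative counts as orphaned when none of the objectives it
    names appears in the agreed list of strategic objectives.
    """
    orphaned = sorted(
        name
        for name, links in initiative_links.items()
        if not any(link in objectives for link in links)
    )
    unsupported = sorted(
        objective
        for objective in objectives
        if not any(objective in links for links in initiative_links.values())
    )
    return orphaned, unsupported


if __name__ == "__main__":
    # Made-up objectives and roadmap items.
    objectives = {"Grow enterprise revenue", "Reduce churn"}
    roadmap = {
        "SSO integration": ["Grow enterprise revenue"],
        "Custom report for key client": [],   # no stated rationale
        "Dark mode": ["Improve brand"],       # cites an objective nobody agreed
    }
    orphaned, unsupported = trace_initiatives(roadmap, objectives)
    print("Orphaned initiatives:", orphaned)
    print("Objectives with no initiative:", unsupported)
```

An orphaned initiative is not automatically wrong. It may exist for a good reason, such as a client commitment, but that reason should be written down where both people and AI tools can see it.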

Beyond this, two shifts in practice make the biggest difference. First, treat your strategic artefacts as deliverables, not paperwork. They’re not administrative overhead — they’re the infrastructure your decisions run on, and increasingly, the context your AI tools reason against. Second, establish a synchronised review cadence: when all canonical documents are updated together, coherence is maintained almost as a side effect.

The world in which execution speed is the differentiator is ending. What replaces it is a world where the quality of your strategic thinking, the clarity of your customer insight, and the coherence of the information that connects them determine who wins. That’s true whether your team uses AI heavily, lightly, or not at all. AI makes it urgent and measurable. But the underlying principle is simple: steer first, then accelerate. The teams that get their strategic information right won’t just get more from AI; they’ll make better decisions at every level. And in a world where everyone can move fast, that’s the advantage that compounds.

Wishing you success,

Eddie Pratt — Managing Director, Product Focus.

P.S. Lots of insights for PMs here: 2026 Survey of the Product Management Profession.

Learn about our product management training at https://www.productfocus.com/